NVIDIA H100 vs B200:

AI Training Dominance

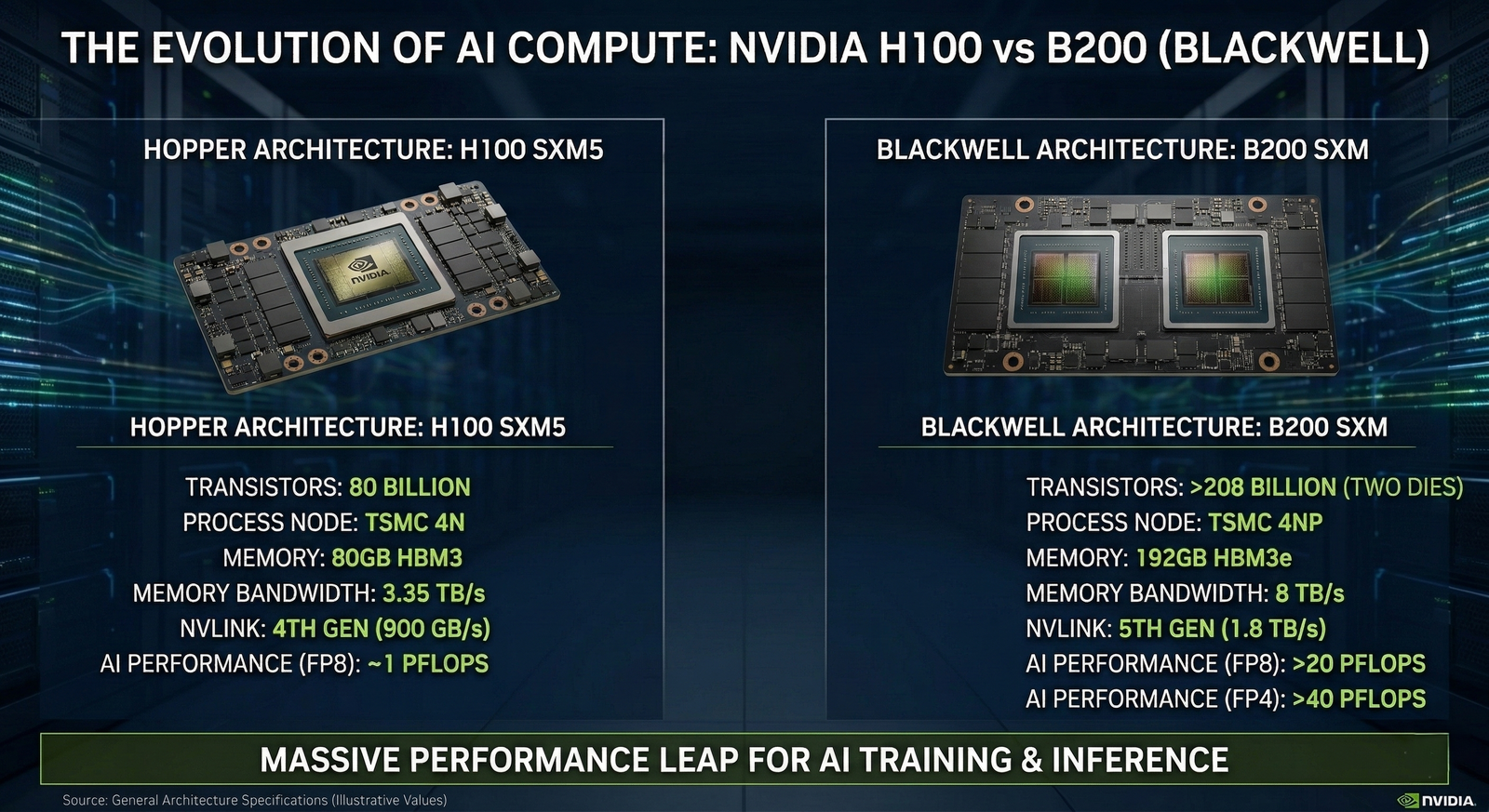

As trillion-parameter AI models become the new industry standard, the debate between the established NVIDIA H100 (Hopper) and the revolutionary B200 (Blackwell) has reached a fever pitch. At BLD Quantiva, we analyze which infrastructure yields the highest TCO efficiency for your compute cluster.

The H100 Era

The H100 remains the most reliable workhorse for models up to 500B parameters. Its FP8 precision and mature ecosystem allow for immediate, risk-free deployment in existing data centers.

The B200 Leap

Blackwell introduces FP4 precision, delivering 20 PFLOPS of compute. For trillion-parameter scaling, B200 reduces power consumption by a staggering 75% compared to its predecessor.

Why Architectural Choice Matters

Transitioning to Blackwell is not just about raw speed; it's about the 5th Gen NVLink interconnect. With 1.8 TB/s of bidirectional throughput, B200 eliminates the data bottlenecks that often plague massive GPU clusters. For AI startups, this means cutting training time from months to weeks.

Deploy Your Next Cluster

BLD Quantiva provides rigorous military-grade testing for H100 and B200 configurations. Ensure your infrastructure is ready for the future of generative AI.

Consult with Experts